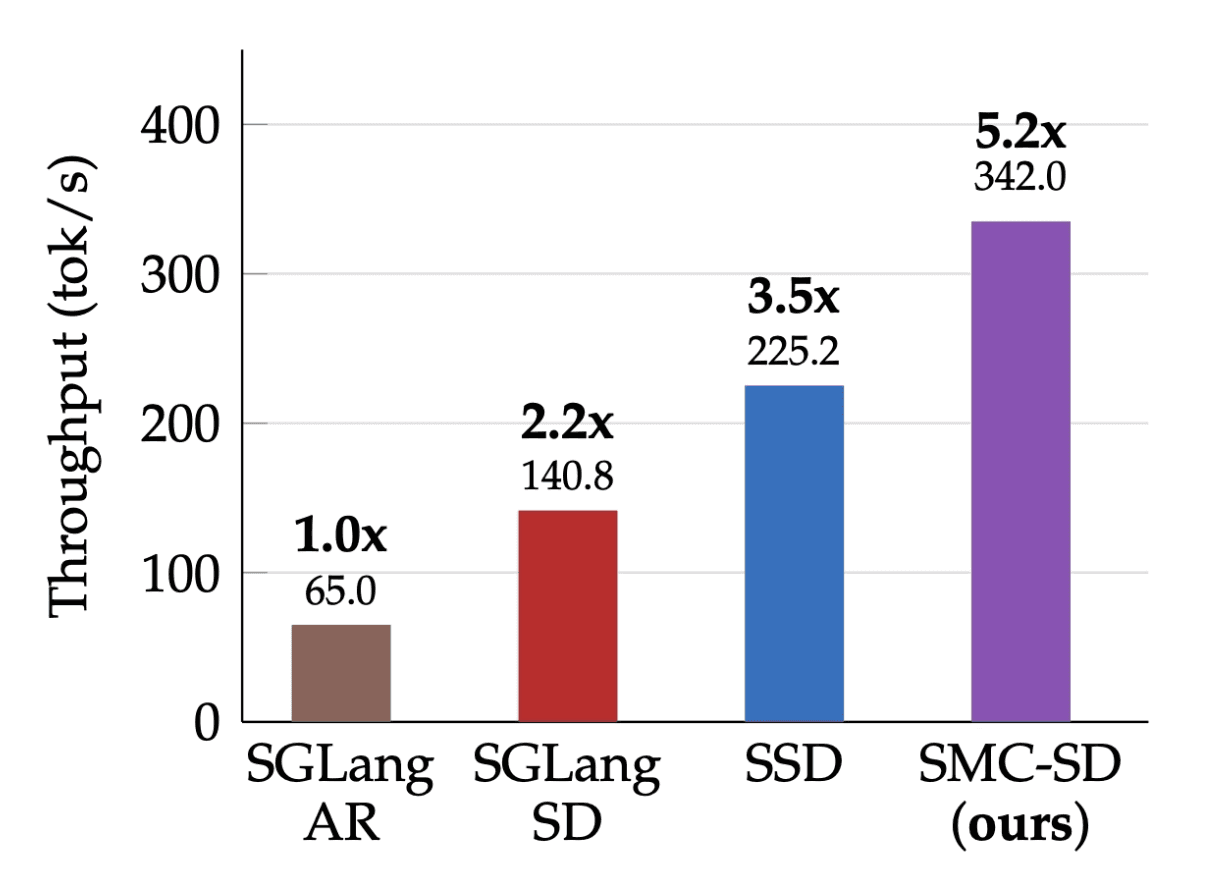

TLDR: we introduce Sequential Monte Carlo Speculative Decoding, an algorithmic optimization that achieves SOTA results in tok/s on GPU. We show test results on NVIDIA H100. Preprint and code are both available.

Today we're open-sourcing SMC-SD (sequential Monte Carlo speculative decoding), a new SOTA approximate inference algorithm developed in collaboration with researchers at Cornell, MIT, and ETH Zürich. On a Llama 70B model running across four H100s, SMC-SD delivers:

- 5.2× throughput over autoregressive decoding
- 2.36× over state-of-the-art speculative decoding (SGLang)
- Within 3% of the target model's accuracy across reasoning, instruction-following, and coding benchmarks

Makora’s singular mission is to automatically unlock the peak performance of AI hardware. To this end, we’ve invested heavily in automated tooling that delivers kernel optimizations, inference engine optimizations, and algorithmic optimizations. This benefits all modern AI use cases, from RL training rollouts to multi-turn tool use and agentic coding, which live and die by inference throughput. We’re particularly excited about techniques like SMC-SD because the “roofline” of algorithmic optimizations is much higher than that of other layers of the stack. Below, we dig into the results and analysis.

Standard speculative decoding is exact rejection sampling: it covers the case where you want to sample precisely from the target distribution. Once you reframe the verification step as importance sampling, inference acceleration becomes an instance of probabilistic inference. That reframing does two things. First, it gives you a knob: trade a small, bounded amount of approximation error for substantial throughput gains. Second, it unlocks a class of sampling targets that standard speculative decoding fundamentally cannot express, such as reward-weighted policies and tempered distributions over entire sequences.

The idea

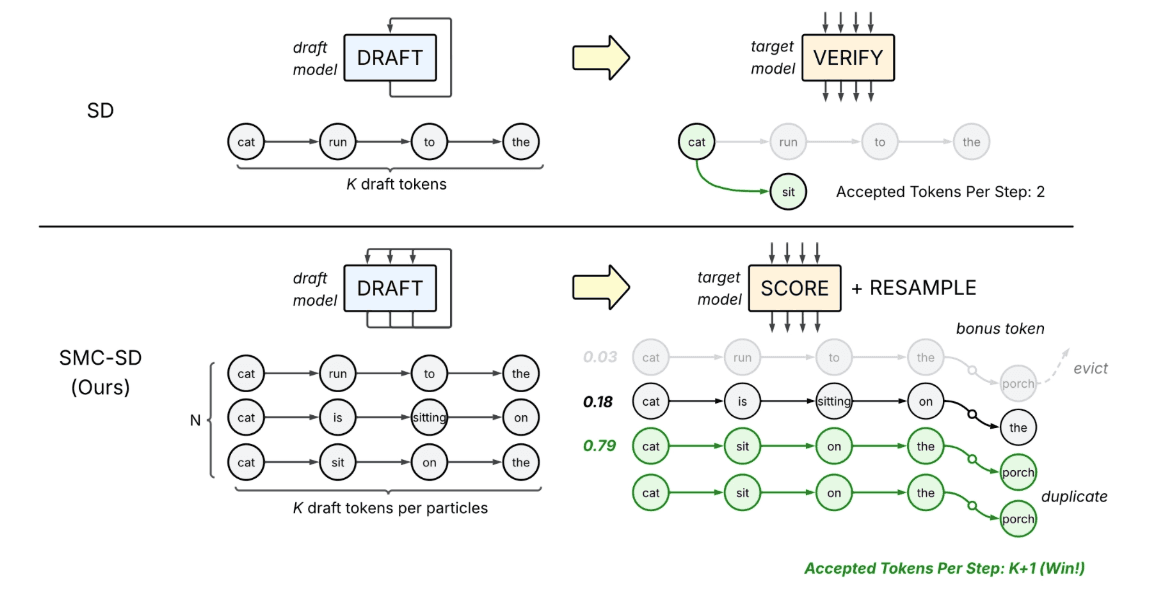

Speculative decoding amortizes the cost of a large target model by having a small draft model propose K tokens, which the target verifies in a single batched forward pass. The catch: verification is rejection sampling, so the first mismatch truncates the block and triggers a KV-cache rollback. When draft and target diverge, throughput collapses.
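To make the truncation behavior concrete, here is a minimal sketch of the textbook accept/reject rule used in standard speculative decoding. It is not taken from any particular inference engine: the function name `verify_draft`, the dict-based distributions, and the omission of the bonus token sampled when every draft token is accepted are all simplifying assumptions for illustration.

```python
import random

def verify_draft(draft_tokens, q_dists, p_dists, rng):
    """Verify one block of K draft tokens (illustrative helper, not a real API).

    draft_tokens: token ids proposed by the draft model.
    q_dists / p_dists: per-position dicts {token: prob} from draft / target.
    Returns the accepted prefix, plus one corrected token at the first rejection.
    """
    out = []
    for tok, q, p in zip(draft_tokens, q_dists, p_dists):
        # Accept the draft token with probability min(1, p(tok) / q(tok)).
        accept_prob = min(1.0, p.get(tok, 0.0) / q[tok])
        if rng.random() < accept_prob:
            out.append(tok)
            continue
        # Rejected: sample a replacement from the residual max(p - q, 0),
        # then stop. Every later draft token in the block is discarded.
        residual = {t: max(p[t] - q.get(t, 0.0), 0.0) for t in p}
        toks = list(residual)
        out.append(rng.choices(toks, weights=[residual[t] for t in toks])[0])
        break
    return out

rng = random.Random(0)
uniform = {0: 0.5, 1: 0.5}
# Draft and target agree exactly, so the whole block is accepted.
agree = verify_draft([0, 1, 0], [uniform] * 3, [uniform] * 3, rng)
# Target gives zero mass to the first draft token, so the block is cut to one token.
clash = verify_draft([0, 0, 0], [{0: 1.0}] * 3, [{0: 0.0, 1: 1.0}] * 3, rng)
```

The number of tokens returned depends on where the first rejection lands, which is why a poorly aligned draft wastes most of each verified block.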

SMC-SD replaces token-level rejection with importance-weighted resampling over a population of N draft particles. Every round, each particle is extended by exactly K+1 tokens — no rollback, no truncation. Particles with low weight under the target are pruned; high-weight ones are duplicated. The output length is deterministic; the approximation quality is what varies.

Our approach (bottom) compared to standard speculative decoding (top).

In SD, a draft model generates a single sequence of draft tokens. A target model then performs a verification step, accepting a valid prefix of the draft tokens and discarding the rest. SMC-SD instead maintains a set of N candidate sequences (particles). In each iteration, the draft model extends each of the N sequences by K draft tokens (K = 4 in the illustration above). The target model then scores these extensions and samples a bonus token. In the Resample phase, sequences with low weights (e.g., .03) are evicted, while sequences with high weights (e.g., .79) are duplicated to form the new set of N sequences.
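The Resample phase can be sketched as a generic importance-weighted resampling step. This is a hedged illustration rather than the released implementation: it assumes each particle's weight is the product of target-to-draft probability ratios over its new tokens, uses plain multinomial resampling, and ignores the bonus token. The preprint and codebase may differ on all three points, and `resample_particles` is a hypothetical name.

```python
import math
import random

def resample_particles(particles, draft_logps, target_logps, rng):
    """One resampling step over N particles (illustrative sketch).

    particles: list of N token sequences, each just extended by the draft model.
    draft_logps / target_logps: per-particle lists of log-probs for the new tokens.
    Returns (new_particles, normalized_weights); the population stays at size N.
    """
    # Log importance weight: sum over new tokens of log p_target - log q_draft.
    log_w = [sum(t) - sum(d) for t, d in zip(target_logps, draft_logps)]
    # Subtract the max before exponentiating for numerical stability.
    m = max(log_w)
    w = [math.exp(lw - m) for lw in log_w]
    total = sum(w)
    weights = [x / total for x in w]
    # Multinomial resampling: low-weight particles tend to be dropped,
    # high-weight particles tend to be duplicated.
    idx = rng.choices(range(len(particles)), weights=weights, k=len(particles))
    return [list(particles[i]) for i in idx], weights

rng = random.Random(0)
particles = [[7, 1], [7, 2], [7, 3]]
draft_logps = [[math.log(0.5)]] * 3
# Only the first particle has support under the (toy) target.
target_logps = [[math.log(0.5)], [float("-inf")], [float("-inf")]]
new_particles, weights = resample_particles(particles, draft_logps, target_logps, rng)
```

Unlike rejection-based verification, every call returns exactly N sequences of the same length, so there is nothing to roll back. What varies is how many distinct particles survive.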

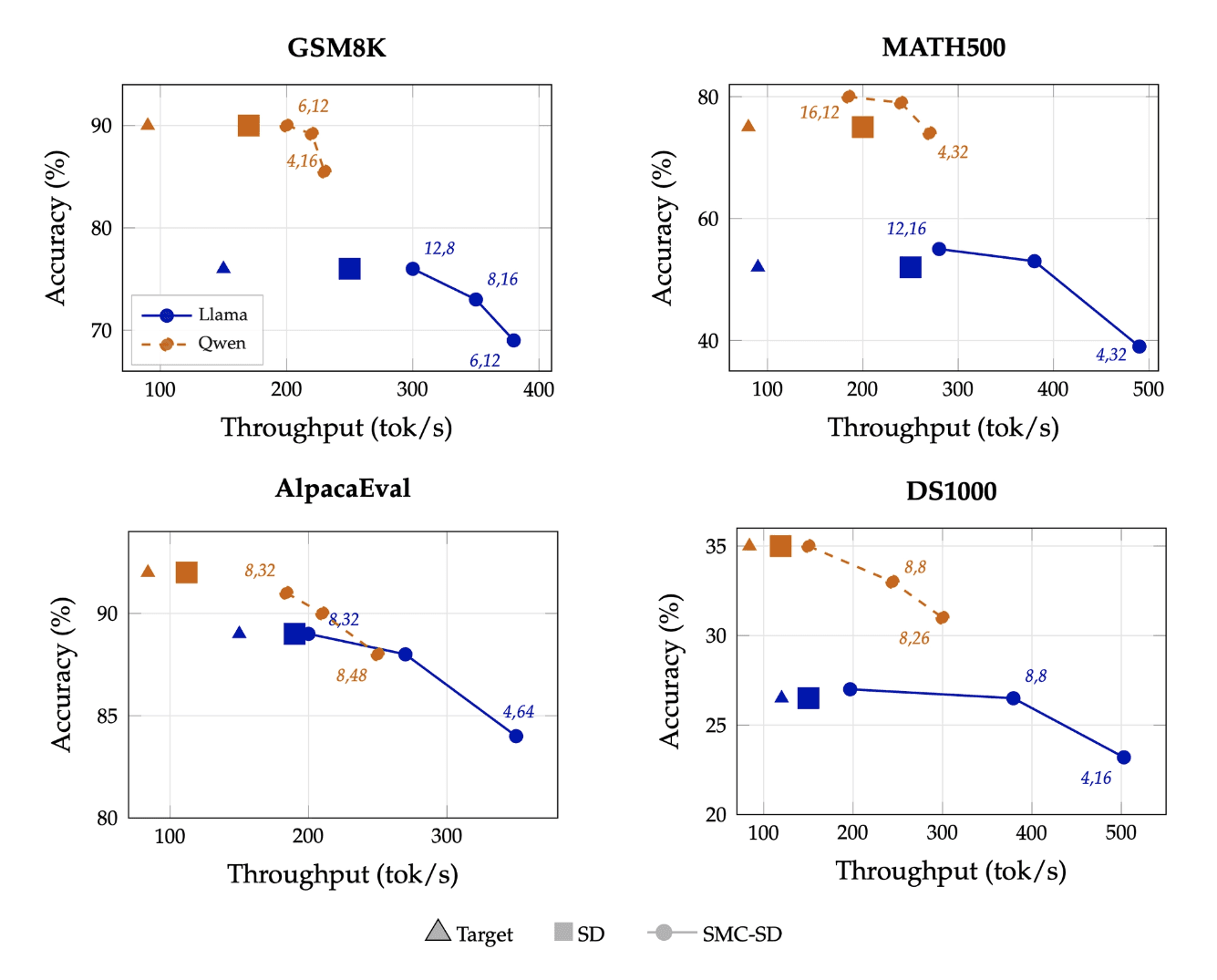

Most recent work on speculative decoding (EAGLE, Medusa, tree-based verification) targets the same axis: draft-target alignment. Better proposals, higher acceptance rates, more tokens per round. These are real wins, and they're complementary to SMC-SD rather than alternatives. A better draft model makes SMC-SD better too.

SMC-SD moves along a different axis. When draft and target are well-aligned, it's faster than SD without losing accuracy: extra particles come essentially free in the memory-bound regime, so there's no reason not to use them. When alignment is weaker, SD's acceptance rate collapses and throughput degrades sharply. SMC-SD instead exposes a tunable Pareto frontier: trade a bounded amount of approximation error for substantial additional throughput. Standard SD has no corresponding knob. The two axes compose. Pair a stronger EAGLE-style draft with SMC-SD and you get both.
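A back-of-the-envelope roofline model shows why extra particles can be nearly free while decoding is memory-bound. The sketch below is an assumption-laden simplification: it counts only weight reads and roughly 2 FLOPs per parameter per token, ignores KV-cache traffic, attention cost, and multi-GPU communication, and uses round illustrative hardware numbers rather than measured specs.

```python
def decode_step_time(n_seqs, params, bytes_per_param, peak_flops, mem_bw):
    """Roofline-style lower bound on one decode step, in seconds (illustrative)."""
    # Weights are read once per step and shared by every sequence in the batch.
    weight_bytes = params * bytes_per_param
    # Roughly 2 FLOPs per parameter for each sequence's new token.
    flops = 2 * params * n_seqs
    # The step takes at least as long as the slower of the two resources.
    return max(weight_bytes / mem_bw, flops / peak_flops)

# Illustrative round numbers (assumptions, not vendor specs or measurements).
PARAMS = 70e9          # 70B-parameter target model
BYTES_PER_PARAM = 2    # 16-bit weights
PEAK_FLOPS = 1e15      # 1 PFLOP/s of dense compute
MEM_BW = 3e12          # 3 TB/s of memory bandwidth

t_one = decode_step_time(1, PARAMS, BYTES_PER_PARAM, PEAK_FLOPS, MEM_BW)
t_sixteen = decode_step_time(16, PARAMS, BYTES_PER_PARAM, PEAK_FLOPS, MEM_BW)
t_thousand = decode_step_time(1000, PARAMS, BYTES_PER_PARAM, PEAK_FLOPS, MEM_BW)
```

Under these assumptions, one sequence and sixteen sequences take the same time per step because the weight read dominates. The step only slows down once the batch is large enough for compute to become the bottleneck.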

What's next?

The 5.2× result leaves real headroom against the theoretical roofline. We're actively working on overlapping resampling with drafting, disaggregating draft and target across heterogeneous hardware, and adapting N and K dynamically at runtime.

This also rides a hardware trend we're betting on: compute is growing faster than memory bandwidth. Blackwell keeps HBM roughly fixed relative to Hopper while scaling FLOPs hard. Algorithms that spend compute to save bandwidth, SMC-SD among them, are going to matter more, not less, as the next generation of accelerators lands.

See for yourself

Makora’s end-to-end performance engineering platform will apply algorithmic optimizations like SMC-SD automatically, alongside other techniques to improve GPU kernel and inference engine performance. Reach out for early access to the latest and greatest from our engineering team.

ArXiv Preprint: https://arxiv.org/abs/2604.15672

Codebase: https://github.com/abdelfattah-lab/smcsd
