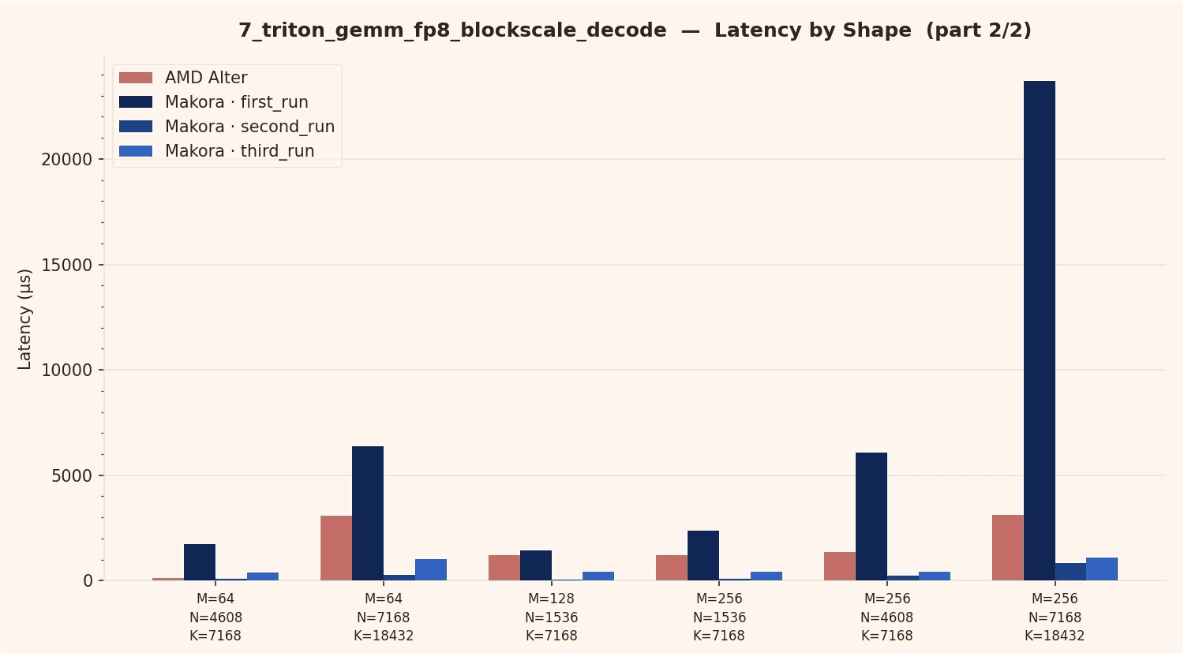

TLDR: This is part 1 of a series on low-precision GEMM kernels for the AMD MI355X. We use MakoraGenerate to write fast FP8 GEMMs in HIP, go over what makes FP8 easy and what makes it hard, and release code along with performance results. The resulting kernels beat AMD's publicly available AITER library on a variety of shapes. Next we'll explore FP4 and FP6, along with some clever hardware features we can exploit.

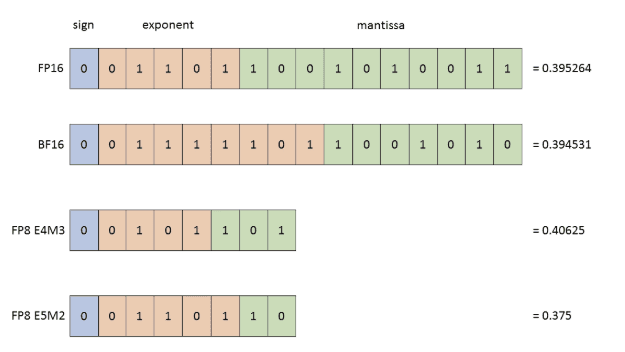

FP8 refresher

FP8 is a family of 8-bit floating-point formats used to increase throughput and reduce memory bandwidth pressure compared to FP16 and BF16. The exact dynamic range and precision depend on the specific FP8 encoding. Two FP8 encodings are common in practice:

E4M3: 4 exponent bits, 3 mantissa bits (more mantissa precision, smaller range)

E5M2: 5 exponent bits, 2 mantissa bits (larger range, less precision)
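To make the range versus precision trade-off concrete, here is a minimal host-side decoder sketch. It assumes the OCP-style encodings (exponent bias 7 for E4M3, 15 for E5M2) and skips NaN/Inf handling; other FP8 variants, such as the FNUZ encodings, use a different bias and would need different constants. The function names are ours, for illustration only.

```cpp
#include <cassert>
#include <cmath>
#include <cstdint>

// Decode one FP8 byte with `exp_bits` exponent bits and `man_bits` mantissa
// bits. Assumption: OCP-style bias of 2^(exp_bits - 1) - 1. NaN/Inf bit
// patterns are NOT special-cased in this sketch.
inline float decode_fp8(std::uint8_t byte, int exp_bits, int man_bits) {
    assert(1 + exp_bits + man_bits == 8);
    const int bias = (1 << (exp_bits - 1)) - 1;
    const int exp = (byte >> man_bits) & ((1 << exp_bits) - 1);
    const int man = byte & ((1 << man_bits) - 1);
    float mag;
    if (exp == 0) {
        // Subnormal: no implicit leading 1, exponent fixed at 1 - bias.
        mag = std::ldexp(static_cast<float>(man), 1 - bias - man_bits);
    } else {
        // Normal: implicit leading 1 in front of the mantissa bits.
        mag = std::ldexp(static_cast<float>((1 << man_bits) + man),
                         exp - bias - man_bits);
    }
    return (byte & 0x80) ? -mag : mag;
}

inline float decode_e4m3(std::uint8_t b) { return decode_fp8(b, 4, 3); }
inline float decode_e5m2(std::uint8_t b) { return decode_fp8(b, 5, 2); }
```

Under these assumptions, `decode_e4m3(0x7E)` gives 448 (the largest finite E4M3 value) while `decode_e5m2(0x7B)` gives 57344. The first value above 1.0 is 1.125 in E4M3 but 1.25 in E5M2, which is the precision cost of the extra exponent bit.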

FP8 GEMM is typically paired with explicit scaling. Instead of treating FP8 as a drop-in replacement for FP16, you treat it as a compressed representation and recover a usable numeric range via scale factors.

Reference: NVIDIA
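As a rough illustration of why scaling matters, the sketch below simulates per-tensor scaling in FP32. It assumes OCP-style E4M3 (maximum finite value 448) and finite inputs; `round_to_e4m3`, `QuantizedTensor`, and `quantize_per_tensor` are hypothetical names, and the quantized values are held as floats for clarity rather than as real FP8 bytes.

```cpp
#include <algorithm>
#include <cassert>
#include <cmath>
#include <cstddef>
#include <vector>

constexpr float kE4M3Max = 448.0f;  // largest finite OCP-style E4M3 magnitude

// Round a finite float to the nearest E4M3-representable value, saturating
// at +/-448. Simulated in FP32; no hardware FP8 type is involved.
inline float round_to_e4m3(float x) {
    assert(std::isfinite(x));
    const float a = std::min(std::fabs(x), kE4M3Max);
    if (a == 0.0f) return 0.0f;
    int e = 0;
    std::frexp(a, &e);                              // a = f * 2^e, f in [0.5, 1)
    const int lead = std::max(e - 1, -6);           // -6: smallest normal exponent
    const float step = std::ldexp(1.0f, lead - 3);  // spacing with 3 mantissa bits
    return std::copysign(std::nearbyint(a / step) * step, x);
}

struct QuantizedTensor {
    std::vector<float> q;  // values on the E4M3 grid (kept as float here)
    float scale;           // dequantized value = q[i] * scale
};

// Per-tensor scaling: pick one scale so the largest magnitude maps to 448.
inline QuantizedTensor quantize_per_tensor(const std::vector<float>& x) {
    float amax = 0.0f;
    for (float v : x) amax = std::max(amax, std::fabs(v));
    const float scale = amax > 0.0f ? amax / kE4M3Max : 1.0f;
    QuantizedTensor t{std::vector<float>(x.size()), scale};
    for (std::size_t i = 0; i < x.size(); ++i) {
        t.q[i] = round_to_e4m3(x[i] / scale);
    }
    return t;
}
```

With inputs around 1e-4, rounding directly to E4M3 flushes everything to zero, because the smallest E4M3 subnormal is 2^-9. After per-tensor scaling, the same inputs come back within a few percent, which is the "compressed representation plus scale factor" view in practice.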

Achieving high performance

Most high performance FP8 GEMMs use one of these scaling schemes:

Per-tensor scaling

Per-row and per-column scaling

Block scaling (scales per M block and N block, and sometimes per K block)

Block scaling is a compromise between accuracy and overhead: it is more precise than a single per-tensor scale and cheaper than per-element scaling. MakoraGenerate must reason about these scale factors because they affect both numerical correctness and kernel performance. Specifically, the scaling scheme dictates how scales are loaded, cached, and applied: either in the epilogue or fused directly into the main compute loop.
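The epilogue versus main-loop distinction follows from simple algebra: a scale that does not depend on K factors out of the sum over K and can be applied once at the end, while a per-K-block scale has to be applied to each block's partial sum. The CPU reference below sketches the per-K-block case. The layout (one A scale per row and K block, one B scale per K block and column) is an assumption chosen for brevity, not the layout used by our kernels or by AITER.

```cpp
#include <cassert>
#include <cstddef>
#include <vector>

// Reference GEMM with per-K-block scales (hypothetical row-major layout):
//   a:  M x K quantized values      b:  K x N quantized values
//   sa: M x (K / kb) scales for A   sb: (K / kb) x N scales for B
inline std::vector<float> gemm_kblock_scaled(
    const std::vector<float>& a, const std::vector<float>& b,
    const std::vector<float>& sa, const std::vector<float>& sb,
    std::size_t M, std::size_t N, std::size_t K, std::size_t kb) {
    assert(kb > 0 && K % kb == 0);
    const std::size_t nkb = K / kb;
    assert(a.size() == M * K && b.size() == K * N);
    assert(sa.size() == M * nkb && sb.size() == nkb * N);

    std::vector<float> c(M * N, 0.0f);
    for (std::size_t m = 0; m < M; ++m) {
        for (std::size_t n = 0; n < N; ++n) {
            float acc = 0.0f;
            for (std::size_t blk = 0; blk < nkb; ++blk) {
                // Unscaled partial sum over one K block.
                float partial = 0.0f;
                for (std::size_t k = blk * kb; k < (blk + 1) * kb; ++k) {
                    partial += a[m * K + k] * b[k * N + n];
                }
                // The scales change with blk, so they cannot wait for the
                // epilogue: apply them before adding to the running total.
                acc += sa[m * nkb + blk] * sb[blk * N + n] * partial;
            }
            c[m * N + n] = acc;
        }
    }
    return c;
}
```

If every K block shares the same scale, the result equals the plain dot product times `sa * sb`, which is why per-tensor and per-row/per-column schemes can defer scaling to the epilogue.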

Beyond raw compute, performance is governed by the interplay of data movement, memory layouts, tiling schedules, and precision strategies. Because the best kernel depends on both the problem shape and the hardware, we use a generate-and-evaluate loop to navigate these trade-offs.

The MI355X (CDNA 4) targets very high peak throughput. In AMD’s published architecture material, peak FP8 throughput is shown at roughly 5 PFLOPS, around 1.9x that of MI300X (CDNA 3).

AMD CDNA 4 architecture whitepaper:

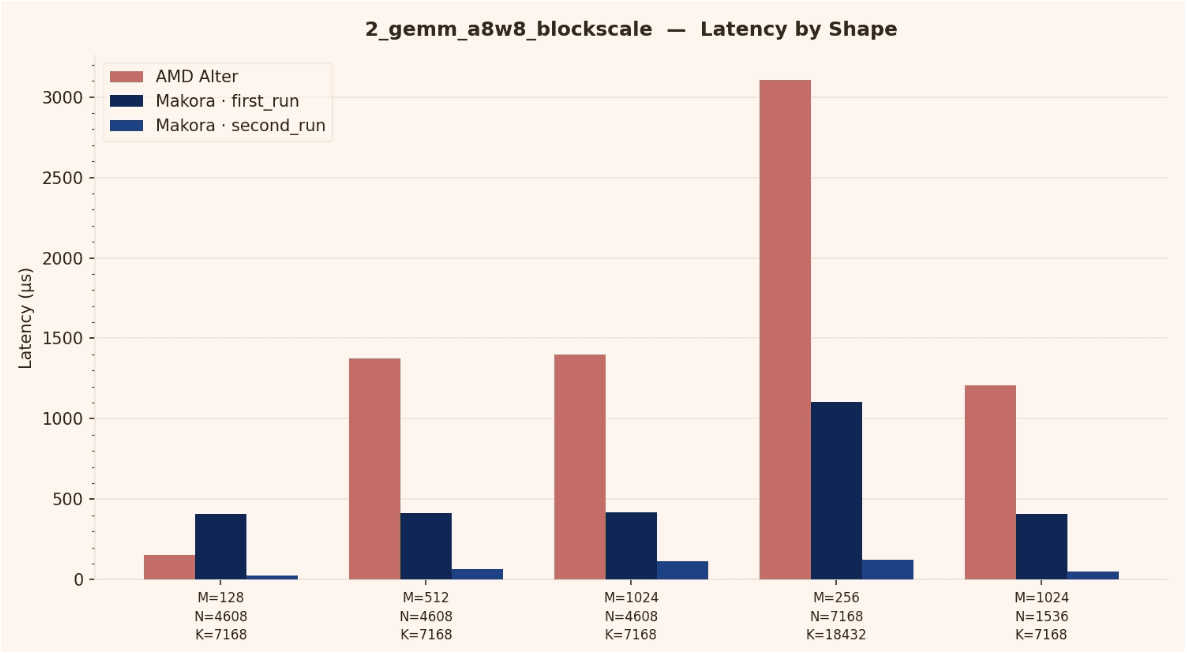

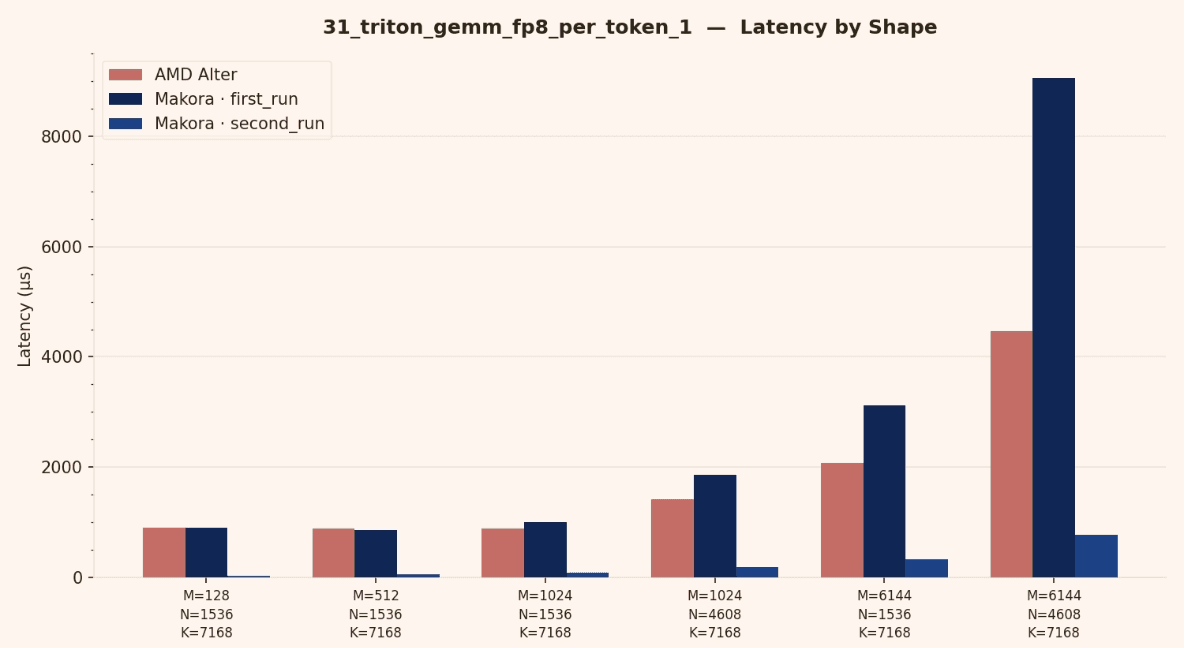

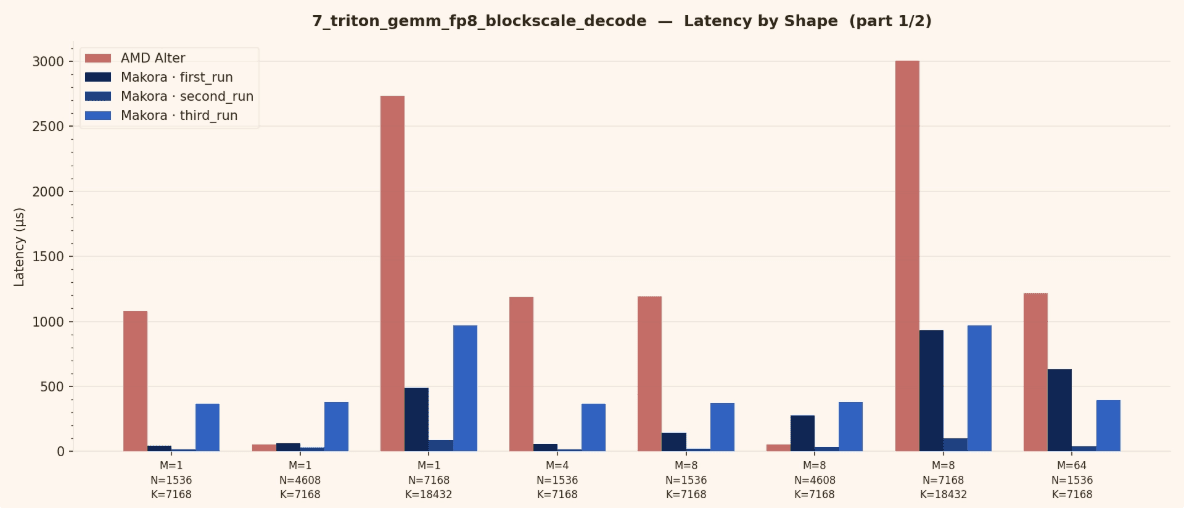
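To put the roughly 5 PFLOPS peak in context, it helps to convert it into a lower bound on runtime for a given shape. The helpers below are a back-of-the-envelope sketch: they count 2·M·N·K floating-point operations per GEMM, take the peak figure at face value, and ignore memory traffic entirely. The function names are ours.

```cpp
#include <cassert>

// FLOPs in an M x N x K GEMM: one multiply and one add per (m, n, k) triple.
inline double gemm_flops(double m, double n, double k) {
    return 2.0 * m * n * k;
}

// Lower bound on runtime in seconds if the kernel sustained `peak_flops`
// (in FLOP/s) for its whole duration. Ignores memory traffic.
inline double min_time_seconds(double m, double n, double k, double peak_flops) {
    assert(peak_flops > 0.0);
    return gemm_flops(m, n, k) / peak_flops;
}

// Achieved throughput in TFLOP/s for a measured runtime in seconds.
inline double achieved_tflops(double m, double n, double k, double seconds) {
    assert(seconds > 0.0);
    return gemm_flops(m, n, k) / seconds / 1e12;
}
```

For example, `min_time_seconds(8192, 8192, 8192, 5e15)` comes out to about 220 microseconds, so a kernel measured at twice that on this shape would be running at roughly half of peak.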

Benchmark setup and results

Hardware: AMD MI355X

ROCm: 7.0

Kernel type: FP8 GEMM

Baseline: reference implementation from AITER

This section summarizes benchmark results and highlights the cases where the agent-generated kernels match or exceed the baseline.

Notes on what improved

Across iterations, generation time decreased significantly while performance increased. The agent learns from previous experiments, reuses what works, and keeps refining the kernel.

Average generation time per iteration:

First run: about 6 hours

Second run: about 2 hours

Third run: about 30 minutes to a state-of-the-art kernel

The key takeaway is that the optimization loop is getting faster and more effective over time, as the agent accumulates prior knowledge and converges with fewer iterations.

Code highlights

The snippets below are examples of the kinds of transformations the agent produces when optimizing FP8 GEMM. We will keep adding excerpts here.

This excerpt shows an MFMA inner loop with four independent MFMA calls to increase instruction-level parallelism. After the MFMA loop, the code applies A and B scale factors before accumulating into FP32.

Key ideas to notice:

Multiple independent MFMA calls to keep the pipeline busy

Packing FP8 into 8-byte chunks

Scale application fused close to accumulation
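The three ideas above can be modeled on the CPU. The sketch below is not the generated kernel and does not use MFMA intrinsics: each scalar accumulator merely stands in for one MFMA call's accumulator registers, the FP8 decode assumes OCP-style E4M3, and all names are hypothetical. It shows the structure only: 8-byte packed loads, four accumulate steps with no dependence on each other, and scale factors applied once after the loop.

```cpp
#include <cassert>
#include <cmath>
#include <cstddef>
#include <cstdint>
#include <cstring>
#include <vector>

// Decode an OCP-style E4M3 byte (bias 7). NaN handling omitted in this sketch.
inline float decode_e4m3(std::uint8_t b) {
    const int exp = (b >> 3) & 0xF;
    const int man = b & 0x7;
    const float mag = exp == 0
        ? std::ldexp(static_cast<float>(man), -9)
        : std::ldexp(static_cast<float>(8 + man), exp - 10);
    return (b & 0x80) ? -mag : mag;
}

// Load 8 consecutive FP8 bytes as one 64-bit word (memcpy avoids aliasing UB).
inline std::uint64_t load_packed8(const std::uint8_t* p) {
    std::uint64_t w;
    std::memcpy(&w, p, sizeof(w));
    return w;
}

// Multiply-accumulate the 8 FP8 lanes of two packed words into `acc`.
// CPU stand-in for a single matrix instruction.
inline float fma_packed8(std::uint64_t a, std::uint64_t b, float acc) {
    for (int lane = 0; lane < 8; ++lane) {
        const auto ab = static_cast<std::uint8_t>(a >> (8 * lane));
        const auto bb = static_cast<std::uint8_t>(b >> (8 * lane));
        acc += decode_e4m3(ab) * decode_e4m3(bb);
    }
    return acc;
}

// Scaled FP8 dot product with four independent accumulator chains.
inline float dot_fp8_scaled(const std::vector<std::uint8_t>& a,
                            const std::vector<std::uint8_t>& b,
                            float scale_a, float scale_b) {
    assert(a.size() == b.size() && a.size() % 32 == 0);
    float acc0 = 0.0f, acc1 = 0.0f, acc2 = 0.0f, acc3 = 0.0f;
    for (std::size_t k = 0; k < a.size(); k += 32) {
        // No accumulator reads another's result, so these four steps can
        // overlap in a pipelined implementation.
        acc0 = fma_packed8(load_packed8(&a[k]),      load_packed8(&b[k]),      acc0);
        acc1 = fma_packed8(load_packed8(&a[k + 8]),  load_packed8(&b[k + 8]),  acc1);
        acc2 = fma_packed8(load_packed8(&a[k + 16]), load_packed8(&b[k + 16]), acc2);
        acc3 = fma_packed8(load_packed8(&a[k + 24]), load_packed8(&b[k + 24]), acc3);
    }
    // Scales are applied once, after the loop, in FP32.
    return (acc0 + acc1 + acc2 + acc3) * (scale_a * scale_b);
}
```

On the GPU the independent accumulators would more likely be separate output tiles than slices of one dot product; the single-output form here just keeps the example short.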

Split K, fused heuristics, and dtype details

This excerpt documents a subtle FP8 detail and the correction applied in the kernel.

And the corresponding accumulation into a temporary FP32 buffer for split K:
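For readers unfamiliar with split K, here is a generic CPU sketch of the pattern, not the excerpt from the generated kernel. K is divided into `splits` equal slices, each slice writes its partial result into its own region of an FP32 workspace, and a final pass reduces the partials. On a GPU each slice would typically run as a separate workgroup; the function name and workspace layout are assumptions.

```cpp
#include <cassert>
#include <cstddef>
#include <vector>

// Row-major split-K GEMM reference: a is M x K, b is K x N, result is M x N.
inline std::vector<float> gemm_split_k(const std::vector<float>& a,
                                       const std::vector<float>& b,
                                       std::size_t M, std::size_t N,
                                       std::size_t K, std::size_t splits) {
    assert(splits > 0 && K % splits == 0);
    assert(a.size() == M * K && b.size() == K * N);
    const std::size_t kslice = K / splits;

    // Temporary FP32 buffer: one M x N partial result per K slice. Keeping
    // partials in FP32 avoids rounding them to a narrower type before the sum.
    std::vector<float> workspace(splits * M * N, 0.0f);
    for (std::size_t s = 0; s < splits; ++s) {
        for (std::size_t m = 0; m < M; ++m) {
            for (std::size_t n = 0; n < N; ++n) {
                float acc = 0.0f;
                for (std::size_t k = s * kslice; k < (s + 1) * kslice; ++k) {
                    acc += a[m * K + k] * b[k * N + n];
                }
                workspace[(s * M + m) * N + n] = acc;
            }
        }
    }

    // Reduction pass: sum the per-slice partials into the final output.
    std::vector<float> c(M * N, 0.0f);
    for (std::size_t s = 0; s < splits; ++s) {
        for (std::size_t i = 0; i < M * N; ++i) {
            c[i] += workspace[s * M * N + i];
        }
    }
    return c;
}
```

Split K trades extra workspace and a reduction step for more parallelism, which tends to pay off when M and N are small relative to K and there are too few output tiles to fill the device.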
